Project Overview

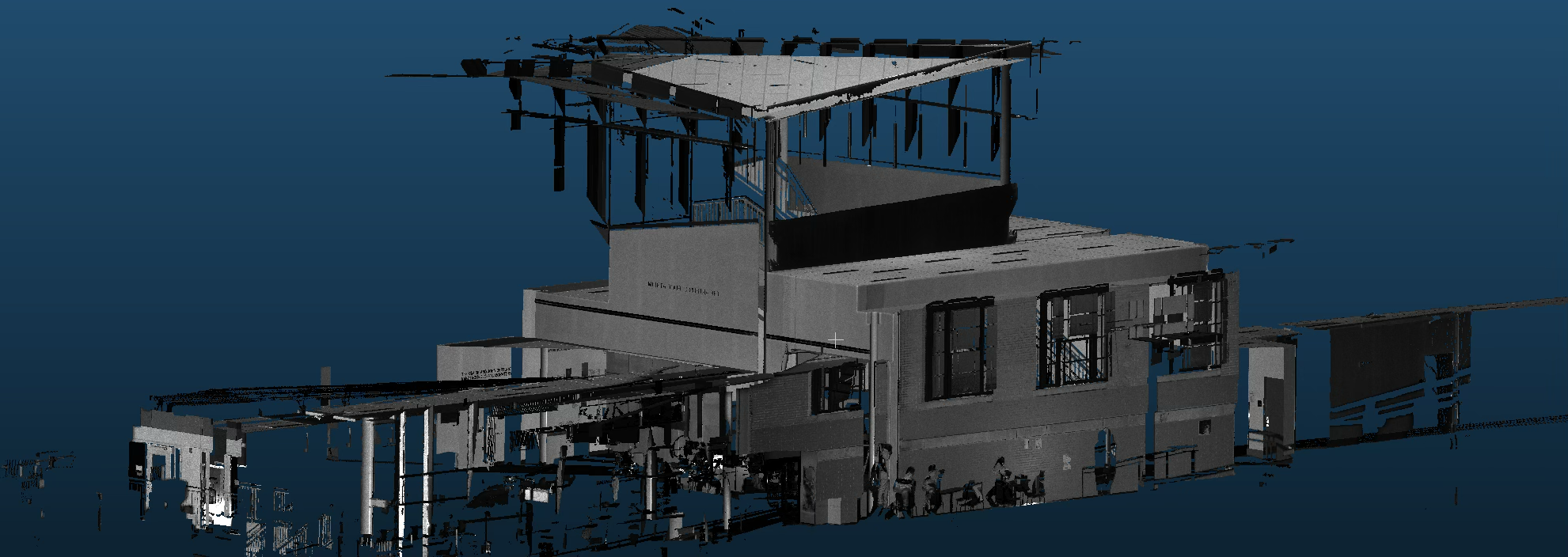

Building information modeling (BIM) and infrastructure documentation face a significant challenge: converting massive point cloud datasets from LiDAR and laser scanning into actionable digital models. Our data-driven machine learning system addresses this by automatically recognizing and extracting 3D objects from cluttered point cloud scenes, transforming raw spatial data into structured building elements suitable for BIM and digital twin workflows. The technology employs deep neural networks designed specifically for 3D geometric data, enabling robust identification of windows, doors, beams, columns, piping, and custom architectural features despite varying point densities, occlusion, and sensor noise. This approach replaces the labor-intensive manual segmentation process, which traditionally requires specialized expertise and weeks of processing time.

The system streamlines construction documentation, facility management, and infrastructure inspection by providing automated, accurate building element extraction that feeds directly into CAD systems and BIM platforms. Architecture and engineering firms benefit from faster as-built model generation, while facility managers gain comprehensive asset inventories for maintenance planning and space optimization. The technology also enables construction progress monitoring, quality control verification, and historic preservation documentation with greater accuracy and efficiency than manual workflows. Our research team, led by Professor Kenji Shimada and graduate researcher Louise Xie, combines expertise in computer vision, geometric modeling, and construction technology. We are actively seeking collaborations with construction companies, technology firms, and research institutions to advance 3D perception capabilities and deploy these solutions across diverse building and infrastructure applications.