Project Overview

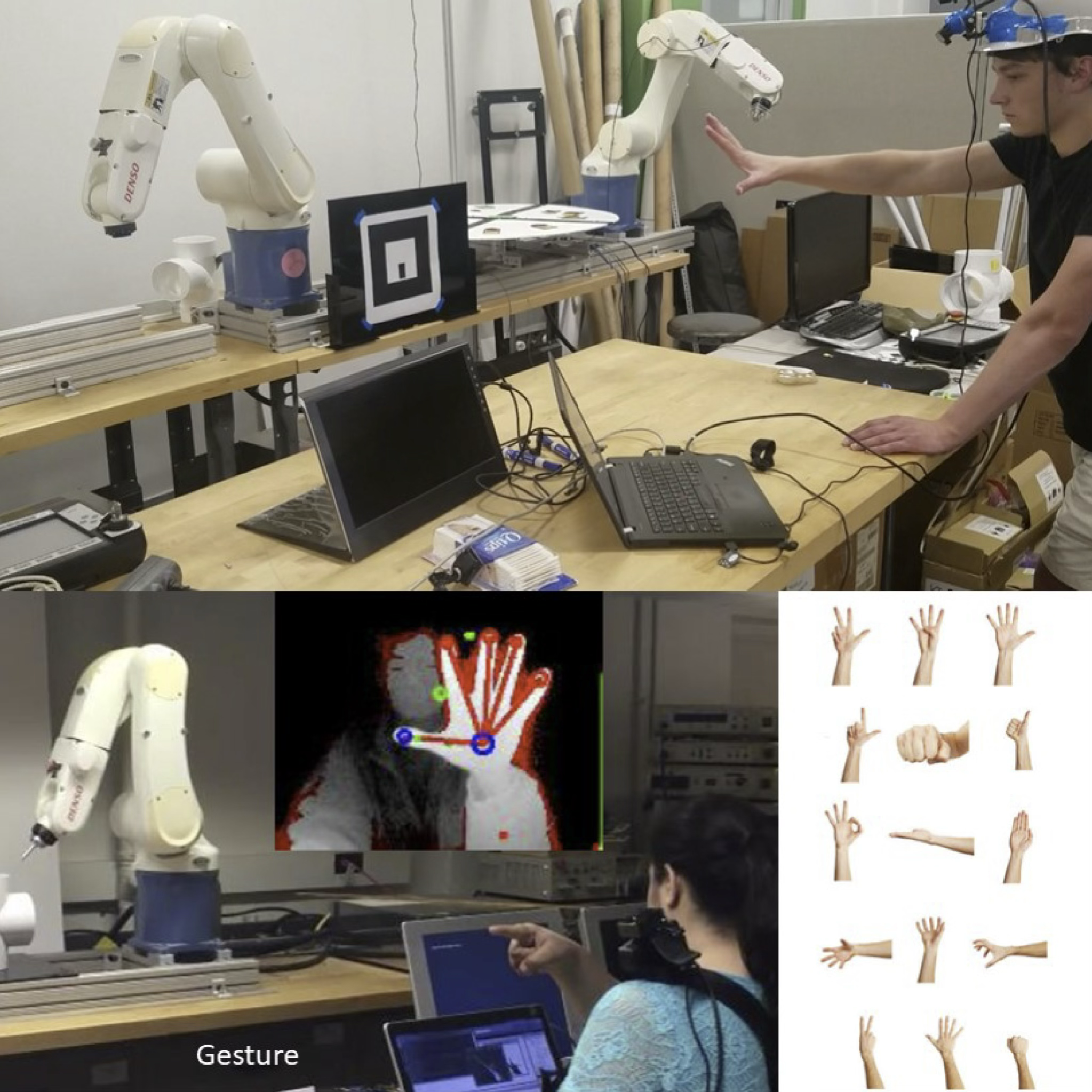

Traditional robot programming requires specialized knowledge and complex teach pendants, which slows deployment and limits accessibility in factory environments. We develop gesture-driven programming interfaces that let operators teach robot motions through natural hand movements, combining real-time computer vision with geometric context from CAD models to translate human intent into safe, precise robot trajectories. Our system integrates 3D hand tracking, machine learning-based gesture classification, and multi-sensor positioning to bridge human demonstration and robot execution. The technical challenge lies in achieving low-latency recognition while enforcing safety constraints and workspace boundaries, so that both industrial robots and research-grade UAVs can be programmed and controlled without traditional scripting or pendant-based interfaces.
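
To make the workspace-boundary constraint concrete, here is a minimal sketch of one way tracked hand positions could be mapped into the robot frame and clamped to an axis-aligned workspace box. It is an illustration under stated assumptions, not the project's implementation: the `Workspace` class, the `hand_path_to_waypoints` function, and the `scale` and `offset` parameters are all hypothetical names, and a real system would also need to handle orientation, collision checking against the CAD geometry, and velocity limits.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Workspace:
    """Hypothetical axis-aligned box the robot tool is allowed to occupy."""
    lower: Point
    upper: Point

    def clamp(self, p: Point) -> Point:
        # Limit each coordinate to the [lower, upper] range of its axis.
        return tuple(
            min(max(v, lo), hi)
            for v, lo, hi in zip(p, self.lower, self.upper)
        )


def hand_path_to_waypoints(
    hand_path: Iterable[Point],
    workspace: Workspace,
    scale: float = 1.0,
    offset: Point = (0.0, 0.0, 0.0),
) -> List[Point]:
    """Map tracked hand positions to robot waypoints inside the workspace.

    Assumes a simple per-axis scale-and-offset mapping between the
    camera frame and the robot frame (an assumption for illustration).
    """
    waypoints = []
    for p in hand_path:
        mapped = tuple(scale * v + o for v, o in zip(p, offset))
        waypoints.append(workspace.clamp(mapped))
    return waypoints


if __name__ == "__main__":
    ws = Workspace(lower=(0.0, 0.0, 0.0), upper=(1.0, 1.0, 1.0))
    # The second and third coordinates fall outside the box and are clamped.
    print(hand_path_to_waypoints([(0.5, 2.0, -1.0)], ws))
```

Clamping is the simplest possible policy. Depending on the application, rejecting an out-of-bounds demonstration and asking the operator to repeat it may be the safer choice.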

This research aims to benefit manufacturing automation and scientific research by reducing setup time and operator training requirements while improving precision and safety. In factory automation, our gesture-based interface enables rapid production line reconfiguration, intuitive quality control procedures, and simplified maintenance operations, making advanced robotics accessible to operators without extensive programming backgrounds. For UAV applications, gesture control provides precise positioning for scientific studies, inspection tasks, and emergency operations where traditional remote control lacks the nuance required for delicate maneuvers. Professor Kenji Shimada leads our multidisciplinary team, which combines expertise in computer vision, human-robot interaction, and industrial automation and collaborates with manufacturing companies and service industries to deploy natural user interfaces that enhance productivity, reduce learning curves, and establish new paradigms for human-robot collaboration.